Generative Artificial Intelligence is on the rise. In my meetings with executives from large companies, one question arises quite frequently:

“How can we use generative AI without suffering from the unpredictability of these models’ responses?”

Another very common question is:

“How is it possible to integrate generative AI with the specific set of data and knowledge already existing in our organization?”

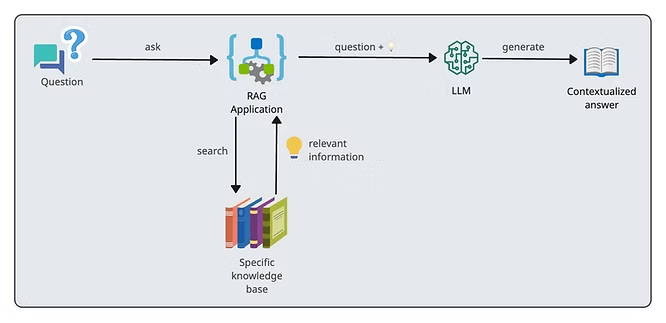

In response to these questions, one technique has been gaining prominence: Retrieval-Augmented Generation (RAG).

Demystifying RAG

RAG enhances large language models (LLMs) by retrieving up-to-date, organization-specific information and supplying it to the model as it generates a response. The result is virtual assistants that not only understand general questions but can also answer within the particular context of a company, providing precise and customized solutions.

A simple example that illustrates the application of this technique well is automated customer service.

Imagine you are chatting with a bank’s RAG-equipped chatbot. Instead of just giving you basic information about loans, this chatbot can directly consult the bank’s credit rules and its financial data. Thus, it can give you highly specific answers.

For example, if you ask about the possibility of getting a loan, the chatbot retrieves your financial history and the bank’s current policies, then uses them to offer details such as the interest rates you would pay and suggestions tailored specifically to you.
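The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the policy snippets, the function names, and the word-overlap scoring are all assumptions made for the example. Real systems typically use vector embeddings for retrieval and then send the assembled prompt to an LLM, a step omitted here.

```python
import re
from collections import Counter

# Hypothetical policy snippets standing in for a bank's internal knowledge base.
DOCUMENTS = [
    "Personal loans require a minimum credit score of 650.",
    "Mortgage interest rates are reviewed every quarter.",
    "Savings accounts have no monthly maintenance fee.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query, document):
    """Count how many query words also appear in the document."""
    query_words = Counter(tokenize(query))
    document_words = Counter(tokenize(document))
    return sum((query_words & document_words).values())


def retrieve(query, documents, top_k=2):
    """Return the top_k documents with the highest word overlap."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:top_k]


def build_prompt(query, documents, top_k=2):
    """Combine the retrieved context and the question into one prompt."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )
```

Calling `build_prompt("What credit score do I need for a personal loan?", DOCUMENTS)` produces a prompt whose context starts with the personal loan policy, so the model answers from the bank's own rules rather than from general knowledge alone.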

Practical Applications of RAG

Below are some examples of real-world solutions in which RAG makes it possible to take full advantage of LLM capabilities:

- Personalized Customer Service: By integrating RAG, chatbots can access and apply information specific to company policies and customer data, significantly improving the quality of service.

- Intelligent Knowledge Management: RAG makes it easier to find relevant information stored in the organization’s data repositories, enabling tools such as internal search and question-answering assistants that make employees’ work more efficient and productive.

- Advanced Data Analytics: In industries that depend on quick data-driven decisions, RAG allows for deep and rapid analyses of large volumes of data, providing essential operational and strategic insights in real time.

- Personalized Legal Support: RAG can be used to create intelligent assistants that provide tailored legal guidance based on legal precedents and company-specific regulations.

- Supply Chain Optimization: RAG can be applied to anticipate issues, optimize logistics, and cut costs in complex supply chains, adapting dynamically to changes in the market and in demand.

- Compliance and Risk Monitoring: RAG can also be crucial for solutions that monitor compliance with sector regulations, flagging potential violations that could affect critical areas such as finance and healthcare.

Strategic Partnership with Databricks

Databricks has a full suite of tools designed to improve the implementation of AI applications, including Retrieval-Augmented Generation (RAG).

TreeID is an official Databricks partner, and thanks to this I had the opportunity to test the platform.

Speaking specifically about RAG, a few features are particularly interesting:

- Real-time Data Access: Databricks provides solutions that allow for immediate integration with and access to updated data, which is essential for accurate and personalized answers in AI applications such as advanced virtual assistants.

- Model Selection and Optimization: The platform offers resources that make choosing and using language models much easier. It integrates with the model providers most used in the corporate world, such as Azure OpenAI, AWS Bedrock, and Anthropic, as well as with open source models like Llama 2 and MPT. It also allows integration with fully customized, fine-tuned models tailored to a client’s needs.

- Quality and Security Assurance: With Databricks Lakehouse Monitoring, taking care of the quality and security of RAG applications becomes much simpler and more direct. This tool automatically checks the applications’ responses for problematic content, such as toxic or unsafe information. Through intuitive dashboards, it is also possible to track in real time how the application is performing, based on detailed metrics such as the acceptance rate of the AI’s recommendations.

All this makes it much easier to keep track of the AI’s behavior and make quick adjustments to the application, ensuring that its planned outcomes are met and that it complies with corporate security and privacy policies.
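To make these metrics concrete, here is a minimal sketch of how an acceptance rate and a simple content check could be computed from a log of interactions. It does not use the Databricks Lakehouse Monitoring API: the `Interaction` record, the `BLOCKLIST` terms, and the function names are hypothetical and exist only for illustration. A real content check would rely on a trained classifier rather than a word list.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged exchange (hypothetical structure for this example)."""
    response: str
    accepted: bool  # True if the user accepted the AI's recommendation


# Hypothetical terms that should never appear in a response.
BLOCKLIST = {"password", "ssn"}


def acceptance_rate(interactions):
    """Share of interactions in which the recommendation was accepted."""
    if not interactions:
        return 0.0
    return sum(item.accepted for item in interactions) / len(interactions)


def flag_responses(interactions, blocklist=BLOCKLIST):
    """Return the interactions whose response contains a blocklisted term."""
    return [
        item
        for item in interactions
        if any(term in item.response.lower() for term in blocklist)
    ]
```

Computed over a recent window of the log, values like these are the kind of numbers a monitoring dashboard would plot over time.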

Together, these tools handle most of the typical technical challenges of building AI applications, allowing RAG applications to be developed faster and helping ensure that they are accurate, current, and properly matched to the specific corporate context.

Conclusion

Thanks to techniques such as RAG, the use of generative AI in the corporate environment is likely to keep growing, offering significant opportunities to improve operational efficiency with the precision and security that such an environment requires. Platforms like Databricks help accelerate this process while providing the governance structure these applications need.